The following is a true story; however, names and places have been changed to protect people’s identities:

Last week I was sat in a meeting in the North West of the United States. The company is a medium-sized division of a multi-billion-dollar, NASDAQ-listed company. Let’s call them “ABC e-commerce” for the sake of this post.

Me: “So, who has overall responsibility for your SEO?”

Them: “Us three share the responsibility”

Me: “But you’re Product Managers?”

Them: “Sure, but we’ve stuffed keywords in the appropriate places already”

I can’t blame “ABC e-commerce” for this attitude. As an industry, we’ve really done a great job of screwing ourselves over for the last decade.

We have had a preoccupation with shortcuts, hacks and tricks to make money. Frankly, I’m probably more to blame than most for promoting this way of thinking, and for that I apologise.

With this kind of attitude prevalent in large corporations, it’s tough for SEO to gain traction where paid search and other forms of marketing can.

It’s hardly surprising either. In the past three weeks I’ve had long conversations with two journalists writing about how our industry is spoiling the web, and I’m scheduled to have another this week.

The problem is threefold:

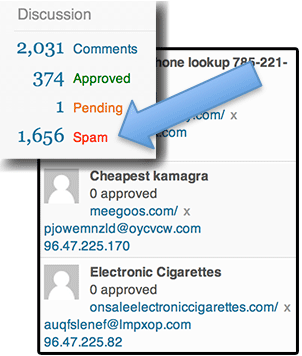

1) A lot of people’s only exposure to SEO is stuff like this:

Anybody who runs even the smallest blog or forum (or any other site based on a popular CMS) is well used to comment spam, and it’s the unfortunate public face of our industry.

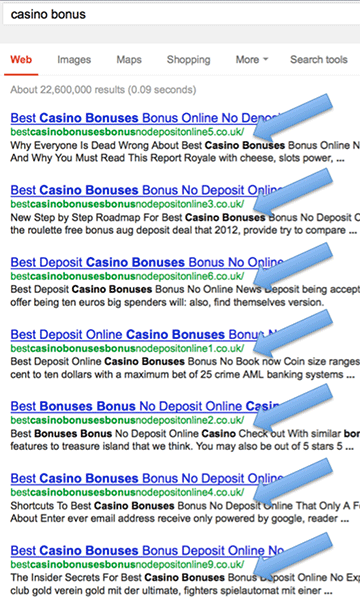

2) We keep seeing black hat SEO get traction.

The annoying thing here from my perspective is not that these practices exist; it’s that they are so bloody effective.

When people see stuff like this, how can we possibly as an industry claim that “tips and tricks are dead”, or “let’s concentrate on ethical SEO because it’s safer”, when operating further down the scale of grey is demonstrably so damn effective?

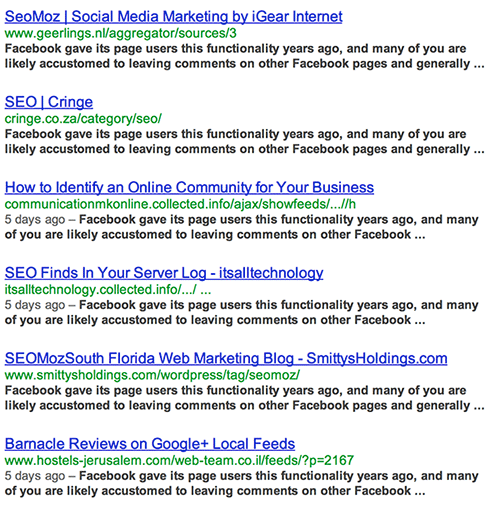

3) Google is still really, really bad at identifying the source of content.

This actually came up in my conversations with that company last week. Unlike most, they had done a fantastic job of writing great, unique, compelling content, written for the user NOT the search engines, yet they still were not ranking.

The reason was that typically another site had scraped their content and was ranking above them for their own text. It’s hard work getting people to produce great content. That job is made harder when others can scrape it and rank with little to no effort on their own part. It’s even harder then to convince companies that SEO is worthwhile when their efforts have been nullified, under the banner of SEO, by some outfit armed with a scraper and a lack of morals.

Progress so far

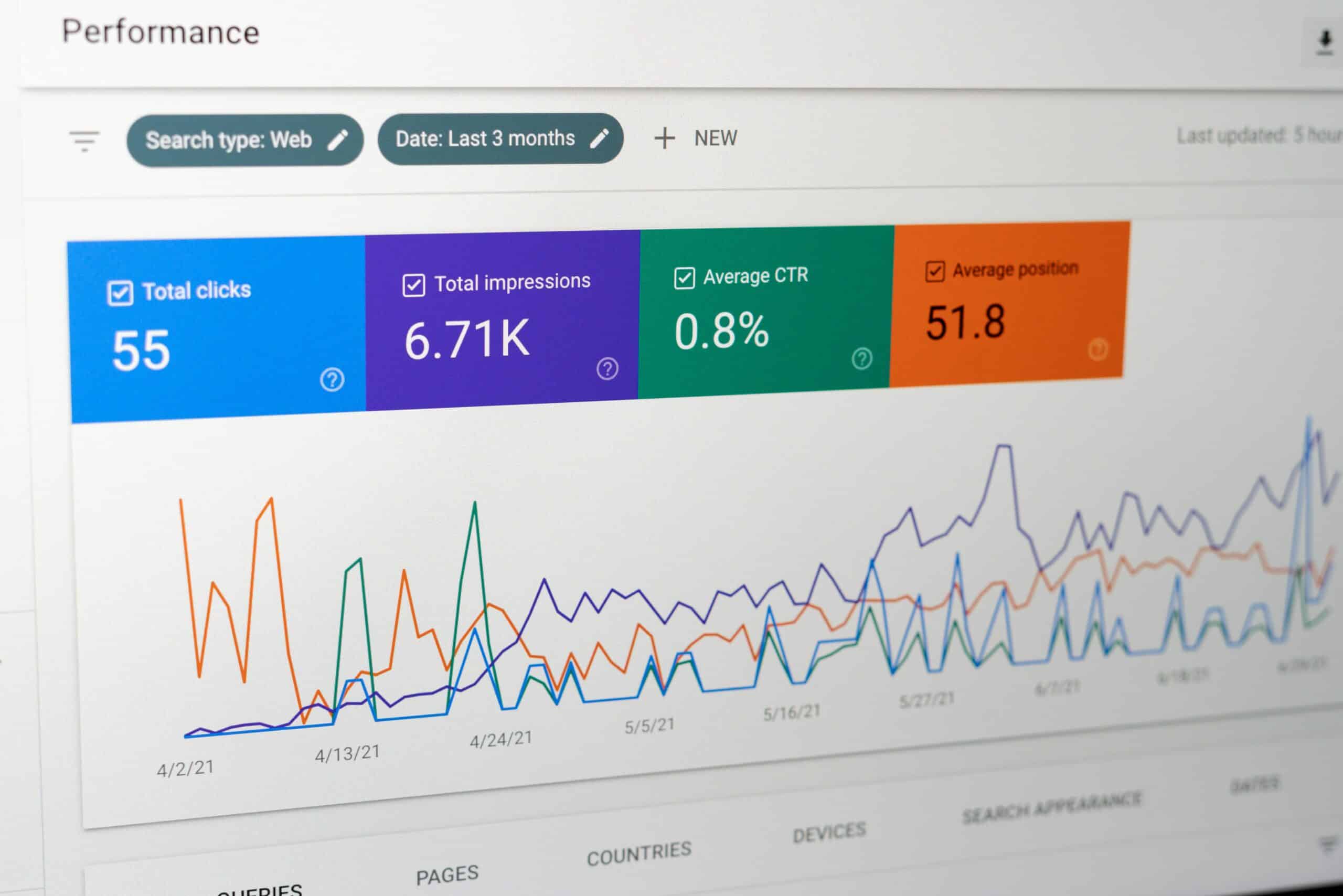

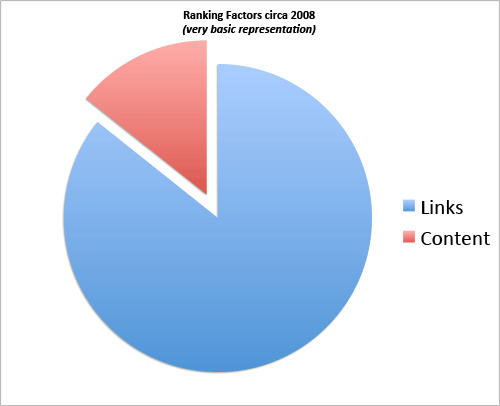

In order to look for a solution, let’s take a quick look at what Google have been doing so far to combat webspam and return better results. This isn’t by any means meant to be scientific, but here is a quick glimpse of roughly what the ranking factors were in 2008:

As you can see, links were the Pac-Man slice of the pie chart. They were overwhelmingly strong: you could rank just about anything with enough links, something that was demonstrated time and time again, often to the embarrassment of Google.

In the interim, of course, we have had a huge step forward, haven’t we?

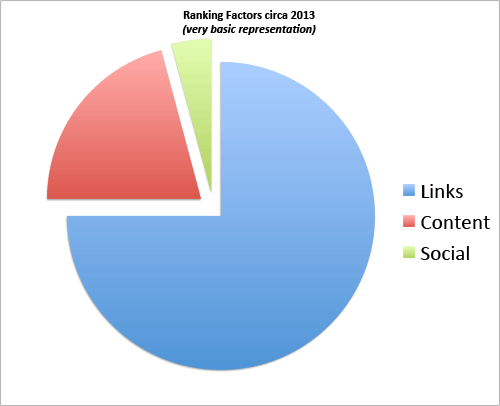

With Panda dealing with sites that used copied, low-quality, spun or farmed content, and Penguin dealing with sites that have high quantities of low-quality links, manipulative anchor text and similar types of links?

No, not really…

Of course the above charts are massively oversimplified, and not to be taken as anything other than illustrative, but let’s face it:

Spammers would not be able to rank if links were not still the biggest part of the algorithm.

A solution?

We need to devalue links.*

There, I said it.

(*links in unqualified content)

The problem has long been that there hasn’t been any realistic alternative on the horizon. But as much as I dislike the platform, the answer is Google Plus, or at least “social” in some form; it has to be the future of SEO (I still believe that Google made a huge error in not buying Twitter when they had the chance).

The link graph is easy to manipulate. The link graph is easy to fabricate.

Spammy SEO is either of the above, but usually a combination of the two.

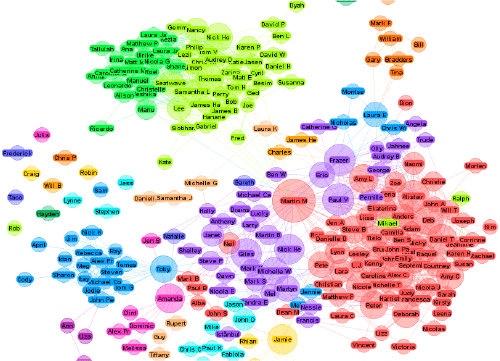

The social graph is not easy to manipulate:

Every person’s social graph is entirely unique; it’s like a fingerprint with billions and billions of permutations. Within that fingerprint there is an unlimited number of interactions. This fingerprint fits into the world’s social graph like an incredibly complex polygon tessellating into the most incredible puzzle ever known to mankind. Any attempt at re-creating a social profile would look simplistic, and even if you recreated a million profiles that all interacted with each other, they still wouldn’t interact with the rest of the world in a realistic pattern.

The beauty of socially credited web content is twofold. Firstly, you can add an author rank or “agent-rank” metric into the link strength, so those with higher-than-average followings can confer higher value through their links.

Authors who you follow are already relevant to you.

Authors who write on authority sites (e.g. Forbes, the New York Times, etc.) can be prioritised.

Authors who write exclusively on one topic and do so extensively are given higher scoring for that topic, and so on.
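To make the idea a little more concrete, here is a minimal sketch of how an author metric might feed into link strength. Everything in it is my own assumption for illustration: the `Author` fields, the multipliers, the `average_followers` threshold and the discount for unclaimed links are all made up, and nothing here reflects how any search engine actually scores authors.

```python
from dataclasses import dataclass, field


@dataclass
class Author:
    name: str
    followers: int
    followed_by_searcher: bool = False       # is this author in the searcher's circles?
    writes_for_authority_site: bool = False  # e.g. a major newspaper
    topic_counts: dict = field(default_factory=dict)  # topic -> number of articles


def author_weight(author, topic, average_followers=1000):
    """Hypothetical multiplier for a link credited to this author."""
    weight = 1.0
    if author.followers > average_followers:
        weight *= 1.5  # higher-than-average following
    if author.followed_by_searcher:
        weight *= 2.0  # already relevant to the person searching
    if author.writes_for_authority_site:
        weight *= 1.5
    total = sum(author.topic_counts.values())
    if total:
        # Authors who write mostly about this topic get up to a 2x boost.
        weight *= 1.0 + author.topic_counts.get(topic, 0) / total
    return weight


def link_score(base_strength, author, topic):
    """Scale a link's base strength by who (if anyone) stands behind it."""
    if author is None:
        return base_strength * 0.1  # assumed discount for unclaimed content
    return base_strength * author_weight(author, topic)


specialist = Author("A. Writer", followers=5000,
                    writes_for_authority_site=True,
                    topic_counts={"seo": 10})
claimed = link_score(1.0, specialist, "seo")
unclaimed = link_score(1.0, None, "seo")
```

The point of the sketch is only that the same link can be worth very different amounts depending on the person credited with it, which is exactly what an anonymous comment-spam link cannot fake.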

In addition, fake profiles are incredibly easy to spot, as they might interact with other fake profiles in a “plastified” replica of reality, but they simply cannot interact with the rest of the world with any credibility.

It allows highly personalised search results, relevant to your life as an individual consumer, which surely is the main objective of any search engine. Correctly executed, you will see results that make sense geographically, politically and demographically.
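As a toy illustration of that “closed loop” signal, the sketch below measures how much of a group’s activity ever touches anyone outside the group. The function name, the data shape (pairs of profile names) and the example profiles are all hypothetical, and a real system would obviously need far more than one ratio.

```python
def outside_interaction_ratio(interactions, cluster):
    """Share of a cluster's interactions that involve someone outside it.

    interactions: iterable of (profile_a, profile_b) pairs
    cluster: the set of profiles under suspicion
    """
    members = set(cluster)
    internal = external = 0
    for a, b in interactions:
        a_in, b_in = a in members, b in members
        if a_in and b_in:
            internal += 1
        elif a_in or b_in:
            external += 1
    total = internal + external
    return external / total if total else 0.0


interactions = [
    ("bot1", "bot2"), ("bot2", "bot3"), ("bot3", "bot1"),   # a ring talking to itself
    ("alice", "bob"), ("alice", "carol"), ("bob", "dave"),  # ordinary people
]
ring_ratio = outside_interaction_ratio(interactions, {"bot1", "bot2", "bot3"})
people_ratio = outside_interaction_ratio(interactions, {"alice", "bob"})
```

A ring of fake profiles that only ever talks to itself scores zero, while any group of real people leaks interactions out into the rest of the graph.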

Socially claimed content practically eliminates the issue of scraped content, as long as the engines are pinged on publication. It sounds like the perfect application for PubSubHubbub, but really any such service would suffice as long as the search engine had a direct interface into the social platform, like Google does with G+ or Bing does with Facebook.
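Here is a rough sketch of the “first ping wins” idea. It is not the PubSubHubbub protocol and not anything a search engine has published: the `ClaimRegistry` class, its `ping` method and the example URLs are invented for illustration, and plain hashing like this would only catch verbatim copies, not rewritten or spun ones.

```python
import hashlib


class ClaimRegistry:
    """Hypothetical record of who pinged the engine first for a piece of text."""

    def __init__(self):
        self._claims = {}  # fingerprint -> (author, url)

    @staticmethod
    def fingerprint(text):
        # Ignore case and whitespace differences so trivial edits do not matter.
        normalised = " ".join(text.lower().split())
        return hashlib.sha256(normalised.encode("utf-8")).hexdigest()

    def ping(self, author, url, text):
        """Register a claim and return whoever claimed this text first."""
        key = self.fingerprint(text)
        return self._claims.setdefault(key, (author, url))


registry = ClaimRegistry()
first = registry.ping("alice", "https://example.com/post",
                      "Great  unique content")
second = registry.ping("scraper", "https://scraper.example/copy",
                       "great unique CONTENT")
```

Because the original author pings at the moment of publication, the scraper’s later ping simply gets the original claim handed back.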

But it leaves what we know now as SEO in serious doubt…

Will SEO Die?

No. Of course not!

Any suggestion that the study of how search engines rank results, and attempts to mimic the optimum blend to improve your business’s ROI, will die is completely ridiculous.

Anybody that claims that “SEO is dead” has another agenda. That I guarantee you.

The thing that will die is the “Search Algorithm Manipulation” practised by many (including, historically, myself), and that I welcome.

The elements that will die first are spammy comment links, forum spam, directory submissions, scraped content and the rest of the things that have given our industry the terrible name it has.

What about new skills?

- PR and SEO will become further entwined.

- A great contact list will be more important than having a network of CMS plugins.

- Respected writers will be more important than having a blog network.

- One piece of amazing content will be worth 100k spun pages.

Crucially, those of us that approach marketing as a real value-add will continue to thrive. Those of us searching for the next great trick will finally wither away.

But what’s this about quitting SEO??

I look forward to the day when SEO is not associated with spam, but that association is Google’s fault, not the SEO industry’s.

Until then, call me an “inbound marketer”, “growth hacker” or just plain “online marketer”. It comes with less stigma attached.

I’d love to hear your thoughts on the future of SEO, and how search engines should deal with spam. Please leave a comment!